Sometimes, “best practices” turn into “bad practices”. And recently, with one of the best new features of Business Central that just fell in our laps (most users probably won’t realize), one good practice became not just unnecessary .. but even a bad practice.

When I asked CentralQ. I got a lot of truth, but also (what I believe) misinterpreted stuff. So – lets write a post, and see if CentralQ picks it up 🤪.

As said, this is all about the new “Tri-State Locking” feature, which was already quite well explained on the blog of Mads Gram here: Tri-state locking – BC Internals, but also on Learn: Tri-state locking in database – Business Central | Microsoft Learn.

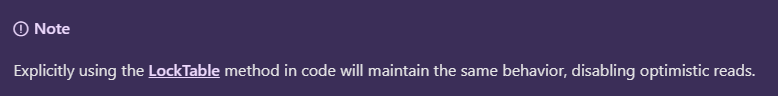

And as such, there isn’t anything much to contribute .. until you read “the small letters”. Well. Not really “small letters” – it’s an actual note on Learn:

The topic of this post is actually all in that one comment. That one “note”. For me, it’s not just a “note”, it’s a complete turnaround of a best-practice-turned-into-a-bad-practice.

Let me try to explain …

Pre v22

So – previously, all we knew for so many years, was – what I call – “pessimistic locking”:

Once a transaction wrote anything to a table, any read-operation on that table, would simply lock (UPDLOCK) until the end of the transaction.

That’s all we knew. That’s all there was. That was default behaviour. We disliked it – but we learned to live with it. All we could do, is to be as efficient as at all possible. This meant:

- to do the least amount of database operations,

- To do the least amount of SQL round trips.

- Delay any locks until the end of the transaction

- Make transaction as short as possible

- …

This also meant: when a developer knew that he/she was going to need a lock, we should explicitly do that. It was good practice.

Now – we have a few read-operations, and only on “FindSet” we could provide a “lock-hint” (with FindSet(true)). When we’d do a “Get”, or “Find(‘-‘)” (yes, there are still reasons to use it), or FindFirst, or FindLast, or even IsEmpty or CalcSums, or … the only way for us to make sure to lock the records, was to use LockTable.

Up until today, some people believe that “LockTable” will lock the table – NO – it wasn’t going to do that. It was simply add an UPDLOCK to that next SELECT-query in SQL.

That was it – a simple practice, a good practice, to use LockTable whenever needed, to lock, because you wanted to lock the records that you were reading, probably because you wanted to change them. There are other reasons, but let’s not go into those.. .

Still .. do know .. everything was still pretty much “pessimistic”: we could add UPDLOCK to every read-operation, but we couldn’t take them away anymore (other then committing the transaction (and no, usually, that’s not what we would like to do (I’d like to keep my fingers 🤪))).

That changed in v22

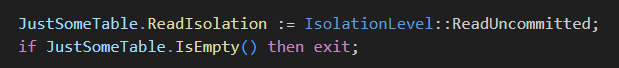

Since v22, we had this new “ReadIsolation” statement, where developers have total control over all their read-operations. Super-duper-cool, because now, we can’t just “add” UPDLOCK, we can control every single read-operation, and AVOID locking further down the transaction. All of a sudden, we all have every single read-operation under control, and it’s not fully controlled anymore by this default “pessimistic” behaviour.

Like – just imagine – when a transaction already wrote to a table, every “IsEmpty” or every “CalcSums” on that table, would Lock every single record that was part of that calculation. And let’s be honest – calculating a sum, we usually don’t want to change those records, do we? Same for “IsEmpty”, right? So all these were unnecessary locks, that FINALLY, we were able to do something about that, like:

No locks .. . FINALLY. This saved us quite some DeadLocks, to be honest.

But ….

This still would require us to have a look at all our read-operations. Microsoft figured .. there is more to gain. So …

Since v23

We have Tri-state locking. A feature we simply enable in SaaS in the Feature Management Page

One word: enable it! Like Starsky would say …

BUT – remember the “Note” we had above?

In a nutshell – whenever we have used “LockTable” in a transaction, the Tri-state locking becomes a pessimistic Two-state locking again, and we lose all benefits again!

I understand that Microsoft wants to play it safe: if we “LockTable”, we want to lock. Sure. But. Personally, I can’t come up with a use case to “want” to lock the entire transaction, and everything extended to it .. out of my scope. I usually want to lock the reads for that particular loop .. not pessimistically in the future in other procedures/variables..

So – as a general rule – I’d dare to say:

- if your code is full of “Locktable” – get them out, replace all of those with a decent ReadIsolation (probably “UpdLock”)

- Add “ReadIsolation” to all read-operations!

- If you have IsEmpty in code – search for them, and consider which ReadIsolation fits best of you. ReadCommitted, or even ReadUncommitted will do just fine in most (if not all) cases.

- Same for CalcSums – search for them, and consider which ReadIsolation fits best of you. ReadCommitted, or even ReadUncommitted will do just fine in most (if not all) cases.

Code Review

May be to look from it from a Code Review Angle:

All this did change how we review code. There is a lot we look at, but in scope of this post, we now for sure demand a “ReadIsolation” for any database read and reject LockTables from new code.. .

Stefan, Arthur – may be you can add it on the list of the LinterCop 😉? – Lol, while writing this post, I checked their rules, and they even already added rule LC0031, totally in line with this post ;-). If you haven’t checked out the BC LinterCop: check here: StefanMaron/BusinessCentral.LinterCop: Community driven code linter for AL (MS Dynamics 365 Business Central) (github.com)

Resources

You want to read more about Tri-state locking? Here are some resources:

4 comments

1 ping

Skip to comment form

Waldo,

Nice blog! Always good to shake the tree 😛

about the “So – as a general rule – I’d dare to say:”

If you almost have no locktable (because it has been changed to ReadIsolation)

and tristate is enabled, reads on a table that did a modify in your session will not use UPLOCK but readcommitted.

So i do not see the actual use for Point 3 (isempty) and point 4 (calcsums) unless i actually see a place if any in Telemetry about lock timeout , long running SQL or Deadlock

Or do you still have allot of cases where you cannot get rid of the locktable?

Author

Thanks for your comment :-).

Well – you never know, do you? With extensibility in mind, you don’t know of anyone has set a LockTable to that same table, anywhere in the transaction.. . I just find it a good practice to not think IF you should, but just think WHAT is the right isolation in this point in time.. .

Great article!

While I appreciate this new setting (and applaud it), it does seem to support bad coding practice. Shouldn’t writes be done once all reads are complete? I’m trying to think of a use case where I would need to read information after I started a write transaction but it is difficult to think of one. I only ask because I might be missing something here

Author

Well, it’s just a language that’s now a bit more optimistic in stead of very pessimistic. It doesn’t change anything on how people “should” developer, or what coding practices should be.

Writes should still be done once all reads are complete – sure.

But do know, in an extensibility-world (who is extending my app? / who else has extension points where I have extended the base app? Who comes first? … ), we are not that much in control anymore – so I’m all for this more optimistic approach 😉

[…] Rec.LockTable – Good Practice? Or Bad Practice? […]