I have yet another option for you that might be interesting for handling the artifacts that Microsoft (Freddy) is providing instead of actual Docker images on a Docker registry.

What changed?

Well, this shouldn’t be new to you anymore. You must have read the numerous blogposts from Freddy announcing a new way of working with Docker. No? You can find everything on his blog.

Let me try to summarize all of this in a few sentences:

Microsoft can’t just keep providing you the Docker images like they have been doing. With all the versions, localizations .. and mainly the countless different hosts (Windows Server, Win 10, Windows updates – in any combination) .. Microsoft simply wasn’t able to keep up a stable and continuous way to provide all the images to us.

So – things needed to change: instead of providing images, Microsoft is now providing “artifacts” (let’s call them “BC Installation Files”) that we can download and use to build our own Docker images. So .. long story short .. we need to build our own images.

Now, Freddy wouldn’t be Freddy if he didn’t make it as easy as possible for us. We’re all familiar with NavContainerHelper – well, the same library has now been renamed to “BcContainerHelper”, and contains the toolset we need to build our images.

What does this mean for DevOps?

Well – lots of your Docker-related pipelines probably downloaded an image, and used that image to build a container. From now on, you won’t download an image, but simply check whether it already exists. If not, you build the image, and afterwards build a container from it, which you can use for your build pipeline in DevOps.

Now, while BcContainerHelper has a built-in caching mechanism in the “New-BCContainer” cmdlet .. I was trying to find a way to have stable build timings .. together with “not having to build an image during a build of AL code”. And there is a simple solution for that…
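A minimal sketch of that check-then-build logic as a single pipeline step, assuming BcContainerHelper is already installed on the agent. The image name `bccurrent:be-latest` and the `be` country are only illustrative choices; `docker images -q` prints the image ID when the image exists locally and prints nothing when it doesn’t.

```yaml
steps:
- task: PowerShell@2
  displayName: Ensure BE image exists
  inputs:
    targetType: 'inline'
    script: |
      # Illustrative image name (assumption), following my own naming convention
      $imageName = 'bccurrent:be-latest'

      # "docker images -q" returns nothing when the image is not available locally
      if (-not (docker images -q $imageName)) {
        Write-Host "Image $imageName not found, building it from artifacts"
        $artifactUrl = Get-BCArtifactUrl -country be
        New-BcImage -artifactUrl $artifactUrl -imageName $imageName
      }
      else {
        Write-Host "Image $imageName already exists, nothing to build"
      }
```

Creating the container from that image would then follow in a next step, the same way your pipeline did before.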

Schedule a build pipeline to build your Docker Images at night

Simple, isn’t it :-). There are only a few steps to take into account:

- Build a yaml that will build all images you need

- Create a new Build pipeline based on that yaml

- Schedule it every night (or every week – whatever works for you)

As an example, I built this in a public DevOps project where I have a few DevOps examples. Here are some links:

- WaldoDevOpsDemos project on DevOps

- The repo with the yaml to build the images

- The yaml

- The build pipeline based on that yaml

The yaml

Obviously, the yaml is the main component here. And you’ll see that I made it as readable as possible:

    name: Build Docker Images

    pool: WaldoHetzner

    variables:
    - group: Secrets
    - name: DockerImageName.current
      value: bccurrent
    - name: DockerImageName.insider
      value: bcinsider
    - name: DockerImageSpecificVersion
      value: '16.4'
    - name: DockerArtifactRetentionDays
      value: 7

    steps:
    # Update BcContainerHelper
    - task: PowerShell@2
      displayName: Install/Update BcContainerHelper
      inputs:
        targetType: 'inline'
        script: |
          [System.Net.ServicePointManager]::SecurityProtocol = [System.Net.ServicePointManager]::SecurityProtocol -bor [System.Net.SecurityProtocolType]::Tls12
          install-module BcContainerHelper -verbose -force
          Import-Module bccontainerhelper

    - task: PowerShell@2
      displayName: Flush Artifact Cache
      inputs:
        targetType: 'inline'
        script: |
          Flush-ContainerHelperCache -cache bcartifacts -keepDays $(DockerArtifactRetentionDays)

    # W1
    - task: PowerShell@2
      displayName: Creating W1 Image
      inputs:
        targetType: 'inline'
        script: |
          $artifactUrl = Get-BCArtifactUrl
          New-BcImage -artifactUrl $artifactUrl -imageName $(DockerImageName.current):w1-latest

    # Belgium specific version
    - task: PowerShell@2
      displayName: Creating BE Image
      inputs:
        targetType: 'inline'
        script: |
          $artifactUrl = Get-BCArtifactUrl -country be -version $(DockerImageSpecificVersion)
          New-BcImage -artifactUrl $artifactUrl -imageName $(DockerImageName.current):be-$(DockerImageSpecificVersion)

    # Belgium latest
    - task: PowerShell@2
      displayName: Creating BE Image
      inputs:
        targetType: 'inline'
        script: |
          $artifactUrl = Get-BCArtifactUrl -country be
          New-BcImage -artifactUrl $artifactUrl -imageName $(DockerImageName.current):be-latest

    # Belgium - Insider Next Minor
    - task: PowerShell@2
      displayName: Creating BE Image (insider)
      inputs:
        targetType: 'inline'
        script: |
          $artifactUrl = Get-BCArtifactUrl -country be -select SecondToLastMajor -storageAccount bcinsider -sasToken "$(bc.insider.sasToken)"
          New-BcImage -artifactUrl $artifactUrl -imageName $(DockerImageName.insider):be-nextminor

    # Belgium - Insider Next Major
    - task: PowerShell@2
      displayName: Creating BE Image (insider)
      inputs:
        targetType: 'inline'
        script: |
          $artifactUrl = Get-bcartifacturl -country be -select Latest -storageAccount bcinsider -sasToken "$(bc.insider.sasToken)"
          New-BcImage -artifactUrl $artifactUrl -imageName $(DockerImageName.insider):be-nextmajor

    # Images
    - task: PowerShell@2
      displayName: Docker Images Info
      inputs:
        targetType: 'inline'
        script: |
          docker images

Some words of explanation:

- Pool: this defines the pool where the pipeline will be executed. I know it will run on exactly one DevOps build agent. This is important: if you have multiple agents in a pool, you need to make sure this yaml is executed on all of them (because you might need the Docker images on every agent). So when you work with multiple build agents, this might not be the best approach.

- Variables: I used a variable group here to share the sasToken as a secret variable over (possibly) multiple pipelines. The rest of the variables are quite straightforward: I’m not using the “automatic” naming convention from the BcContainerHelper, but I’m using my own. Not really a reason for doing that – it just makes a bit more sense to me ;-).

- Steps: I’ll first install (or upgrade) the BcContainerHelper on my DevOps agent and flush the artifact cache if it is too old (7 days retention). Next, I’m simply using the BcContainerHelper to create all images that I will need for my configured pipelines. You see that I have an example for:

- A specific version

- A latest current release version

- A next minor (the next CU update)

- A next major (the next major version of BC)
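If the pool does contain more than one agent, one possible way to get the images onto each of them is to generate one job per agent and pin each job to its agent with a demand on `Agent.Name`. This is only a sketch under assumptions, not what I run myself: the agent names `Agent1` and `Agent2` are hypothetical, and the steps are shortened to a single image.

```yaml
parameters:
# Hypothetical agent names: replace them with the agents registered in your pool
- name: agentNames
  type: object
  default:
  - Agent1
  - Agent2

jobs:
# Expanded at compile time into one job per agent name
- ${{ each agentName in parameters.agentNames }}:
  - job: BuildImages_${{ agentName }}
    displayName: Build Docker images on ${{ agentName }}
    pool:
      name: WaldoHetzner
      demands:
      # Forces this job onto exactly this agent
      - Agent.Name -equals ${{ agentName }}
    steps:
    - task: PowerShell@2
      displayName: Creating W1 Image
      inputs:
        targetType: 'inline'
        script: |
          $artifactUrl = Get-BCArtifactUrl
          New-BcImage -artifactUrl $artifactUrl -imageName bccurrent:w1-latest
```

The list of agents has to be maintained by hand here, which is exactly why this approach gets less attractive the more agents you have.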

Schedule the pipeline to run regularly

Creating a pipeline based on a yaml is easy – but scheduling is quite tricky. Now, there might be a better way to do it – but this is how I have been doing it for years now:

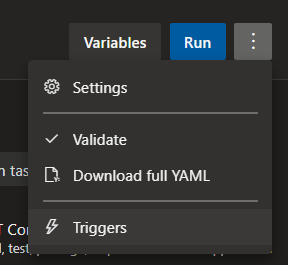

1 – When you edit the pipeline, you can click the three dots in the top right corner, and click “Triggers”

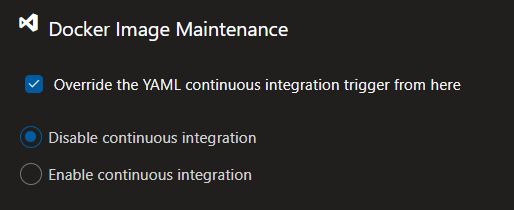

In the Triggers-tab, override and disable CI (you don’t want to run this pipeline every time a commit is being pushed)

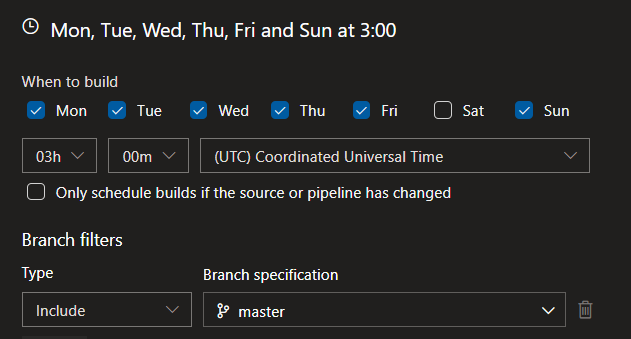

Then, set up a schedule that suits you to run this pipeline.

And that’s it!
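As an alternative to clicking through the UI, Azure Pipelines also lets you declare the schedule in the yaml itself. A minimal sketch, assuming the yaml lives on a branch called `master` and that 02:00 is a quiet moment for your agent (cron schedules are interpreted in UTC):

```yaml
# Disable CI: don't run this pipeline on every pushed commit
trigger: none

schedules:
- cron: "0 2 * * *"        # every night at 02:00 UTC
  displayName: Nightly Docker image build
  branches:
    include:
    - master               # assumption: the branch that holds this yaml
  always: true             # run even when nothing changed in the repo
```

Keep in mind that schedules defined in the UI take precedence over the ones in the yaml, so you would pick one of the two approaches, not both.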

ALOps

If you’re an ALOps user, this would be a way to use the artifacts today. Simply build your images, and use them with the ALOps steps as you’re used to.

We ARE trying to up the game a bit, and also make it possible to do this inline in the build (in the most convenient way imaginable), because we see it necessary for people that are using a variety of build agents, which simply can’t be scheduled (as they are part of a pool). More about that soon!

4 comments


Some tips:

1) See https://docs.microsoft.com/en-us/azure/devops/pipelines/process/phases?view=azure-devops&tabs=yaml#multi-job-configuration for Matrix job

2) See https://docs.microsoft.com/en-us/azure/devops/pipelines/yaml-schema?view=azure-devops&tabs=example%2Cparameter-schema#scheduled-trigger for cron trigger (scheduled trigger)

😉

Author

I knew you would do that :D.

Thanks for the info! I’ll give it a try!

Did you change anything in the normal pipelines? We can’t seem to make it work with New-BCContainer. Either Azure is requesting a docker login, freezes completely after telling us the ContainerHelper version, or is modifying the pre-built images, making them unusable for the next pipeline run

New-BCContainer

with only provided artifact freezes the pipeline until timeout

with only provided imagename requires docker login (pull access denied)

with both provided destroying the original image

Author

We are using an ALOps variant, not BcContainerHelper, so I don’t know if it still works or not.

For any BcContainerHelper-related issues, I strongly recommend you to file an issue here: https://github.com/Microsoft/navcontainerhelper

It might very well be that there were breaking changes since the last update of BcContainerHelper (happened before) – always upgrade to the newest version, see if the script still works, if not: fix ;-).
