Not too long ago, I did a webinar for ALOps. The idea of these webinars is simple: how you can get started with DevOps for Microsoft Dynamics 365 Business Central. And I’m not going to lie: I will focus on doing that with ALOps. But that’s not the only focus of these videos – I will touch on lots of stuff that has nothing to do with ALOps, like strategies, simple “how to’s” and so on – which should be interesting to anyone who is trying to set up DevOps in any way. The webinars will end up on YouTube, and in the description of each video, I will try to give an overview of the topics covered, with direct links to the exact place in the video. Here is an example of the description-section of the first video:

Now, in that webinar, I explained in just a few minutes how to set up a build agent for DevOps. And actually, there is more to say about that – so I’d like to expand on that a little in this blogpost.

What is a DevOps agent?

Just think of it: whatever you define as a build, whatever you will define as a release – scripts need to be executed on some kind of environment by “some service on some server”. A DevOps build agent is this “some service”: a service that is installed on “some server” that will execute the steps that you define in a pipeline.
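To make that a bit more tangible, here is a toy sketch in Python of what such a service boils down to: take a list of steps and execute them in order, stopping at the first failure. This is only an illustration and not how the real Azure DevOps agent is implemented; the function name `run_pipeline` and the example steps are made up for this post.

```python
import subprocess
import sys
from typing import List, Sequence


def run_pipeline(steps: Sequence[Sequence[str]]) -> List[int]:
    """Run each step (a command given as an argument list) in order.

    Stops at the first step that exits non-zero and returns the exit
    codes of the steps that actually ran.
    """
    exit_codes = []
    for step in steps:
        result = subprocess.run(list(step))
        exit_codes.append(result.returncode)
        if result.returncode != 0:
            break  # a failed step fails the whole run
    return exit_codes


# Hypothetical steps: each one just starts a small Python process.
OK_STEP = [sys.executable, "-c", "print('compile app')"]
FAILING_STEP = [sys.executable, "-c", "import sys; sys.exit(1)"]
NEVER_RUN_STEP = [sys.executable, "-c", "print('publish app')"]
```

Calling `run_pipeline([OK_STEP, FAILING_STEP, NEVER_RUN_STEP])` runs the first two steps and skips the third, because the second one fails.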

How do you do that?

Before we go into that, let’s first talk about WHAT you will need. And that is “Docker”. I strongly believe that if you don’t build with Docker, you’re building the wrong way. Without it, there is some kind of database/server instance waiting for you to build on, which has to be cleaned at the start of every new pipeline (take schema changes into account and such..). Building a Docker container at the start of every pipeline, on the other hand, means you have a clean, isolated environment for every single build. You can’t get any more stable/isolated/encapsulated than that.

So .. long story short – let’s assume we all use docker for our build pipelines .. so in that perspective .. our server with DevOps agent needs to be able to:

- Use docker

- Set up containers that have a certain localization and version of Business Central

Well – now that we know that – let’s review the options we have for creating a DevOps agent:

- Microsoft-hosted agents: Not really my favourite. Microsoft has agents ready for you to use. At first, that seems very interesting and secure, but there are challenges:

- You have docker, and in many pipeline runs, the same image can be used. But since the Microsoft Hosted Agent (VM) is discarded after one use (which is secure and all, sure), your next run will again have to pull that image from the docker repo .. No way to reuse docker images.

- If something goes wrong, there is no way for you to fix / investigate / replicate the problem on the agent (no way to remotely log in)

- Slow: not because of resources (those are quite ok), but because you’ll have to download the entire docker image every single time

- Security limitations: it could be that you run into PowerShell/Security limitations that you don’t have under control, like local file access, the ability to download license in a secure way, .. these things.. . It’s hard to specify which exactly, but if it happens, it is difficult to work around – and usually it means you simply have to accept that that specific step is not going to work on a Microsoft-hosted agent.

- Self-hosted agents: that’s where I will refer to that section of the video I was talking about before ;-). It’s my favourite option, because this can be fast, cheap, redundant, flexible, debuggable, … however you want. And as you can see in the video – it really doesn’t have to be difficult to set up a new agent.

- AzureVM: Microsoft has foreseen a nice and easy way for you to use an Azure VM as your build agent. Simply use the template: aka.ms/getbuildagent, fill in the parameters, and everything is done for you – a few minutes later, an agent will pop up in your agent pool. It can’t be done easier, in my opinion. But ..

So, what is your preferred way, waldo?

Well, as said, definitely not the Microsoft-hosted agents. Fast-running pipelines are important in my opinion, and this is certainly not the way to get them running fast. Just look at this build pipeline on a public project – the “start docker” step will always pull the image and then run the build. Don’t get me wrong – the agent itself seems to be pretty fast (faster than some of my other self-hosted agents), but the fact that it needs to download the docker image every single time .. doesn’t make sense. If anyone knows a way to prevent this – I’m all ears.
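To put the image-pull cost in perspective, here is a back-of-the-envelope calculation as a small Python sketch. The function `total_minutes` and all numbers are purely illustrative assumptions, not measurements from any real pipeline.

```python
def total_minutes(runs: int, build_minutes: float, pull_minutes: float,
                  image_cached: bool) -> float:
    """Total wall-clock minutes spent on `runs` pipeline runs."""
    if image_cached:
        # The image is pulled once and reused by every later run.
        return pull_minutes + runs * build_minutes
    # A fresh VM per run means every run pays for the pull again.
    return runs * (pull_minutes + build_minutes)


# Made-up figures: 20 runs, 5 minutes of building, 10 minutes to pull.
with_cache = total_minutes(20, 5, 10, image_cached=True)      # 110
without_cache = total_minutes(20, 5, 10, image_cached=False)  # 300
```

With these made-up figures, the agent that keeps its image spends 110 minutes in total, while the agent that is discarded after every run spends 300.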

For the same reason, AzureVM isn’t my favourite either. It’s great to showcase, and as a backup scenario (should you quickly need an extra agent). But in terms of DevOps build agents, in my opinion, Azure is slow and expensive. I would never use AzureVMs as a long term solution for my DevOps Build Agents.

So – I guess you know my preference:

Self-hosted agents

In essence, in this case, you have complete freedom on:

- The hardware: You want faster running pipelines? Invest in CPU power (GHz, not number of cores). You want multiple agents on one machine? You can do it!

- Where it is hosted: your own data center? Under your desk? Some kind of naked motherboard with some memory and a CPU on top of your server rack (and yes, we do have this ;-))?

- What is installed: you want docker or not? If docker, maybe pre-load all necessary images at night?
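Pre-loading images at night could look something like the Python sketch below. It simply calls `docker pull` for every image in a list. The image name is a placeholder, not a real image, and how you schedule the script (Windows Task Scheduler, a nightly pipeline, ...) is up to you. The `run` parameter is only there so the function can be tried without Docker installed.

```python
import subprocess
from typing import Callable, Dict, Iterable

# Placeholder image list; replace with the images your pipelines use.
IMAGES = ["example.registry.local/bc-build:latest"]


def prepull(images: Iterable[str],
            run: Callable = subprocess.run) -> Dict[str, bool]:
    """Run `docker pull` for each image and report which pulls succeeded."""
    results = {}
    for image in images:
        completed = run(["docker", "pull", image])
        results[image] = completed.returncode == 0
    return results


if __name__ == "__main__":
    for image, ok in prepull(IMAGES).items():
        print(f"{image}: {'pulled' if ok else 'FAILED'}")
```

A failed pull is reported instead of raising, so one missing image does not stop the rest from being refreshed.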

So, the way I think about DevOps agents:

- They need to be easy to set up

- They need to be cheap

- They need to be as fast as possible

- I don’t care about redundancy of one server (I set up a pool of servers which make it redundant)

- I don’t care about failing hardware (it’s easy to set up, and again: there is a pool)

So, we have been investigating cheap cloud solutions, like hetzner.de, which offer cheap and fast cloud servers. I think these are ideal if you insist on having a cloud server as a DevOps agent.

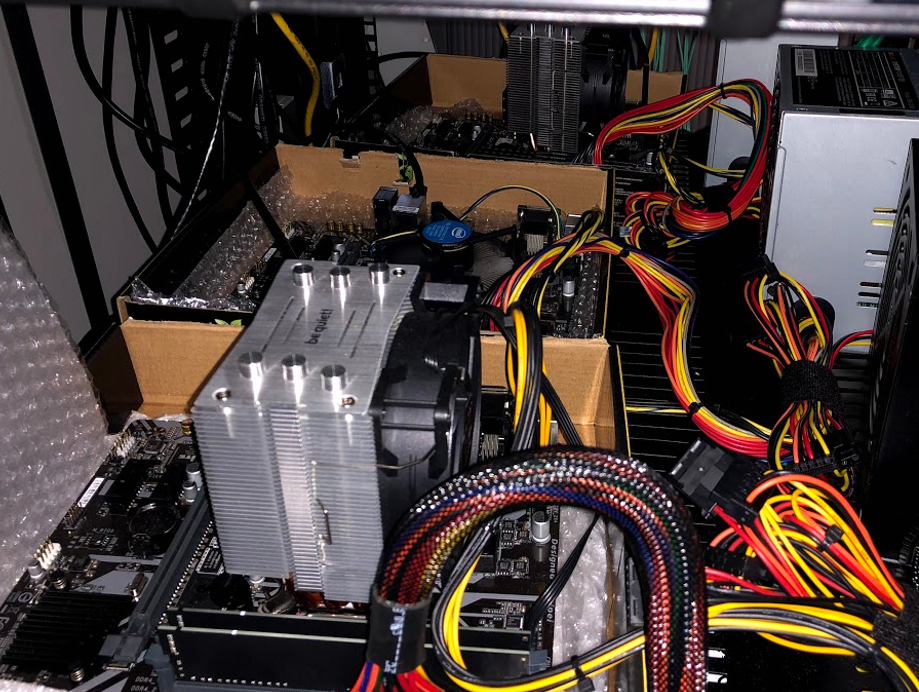

And we have been investigating some OnPrem configurations. This picture is a POC in our server room: 3 motherboards with different configs (fast GHz / lots of cores / lots of RAM / fast M.2, …).

We found out that there is really only one important parameter: the CPU speed (not number of cores, not RAM, not disk speed). So, now that you know that, go get yourself the cheapest 5GHz PC, and have some insanely fast builds ;-).

Disable Windows Updates

I know this sounds weird. But think about it. Windows updates can have a huge impact on the stability of whatever Docker image you are using for your build pipelines. Just take these blog posts from Freddy into account:

- https://freddysblog.com/2020/02/14/hyperv-isolation-to-the-rescue/

- https://freddysblog.com/2020/02/26/the-world-after-february-18th/

Maybe I don’t care about the hardware too much in terms of redundancy – but I do care about my pipelines running stable builds .. and clearly a simple Windows update can mess up my build – all of a sudden, builds will start to fail. So my advice for a DevOps agent (and actually any kind of NAV or BC installation) would be:

- Disable Windows Updates

- Update Windows in a controlled manner, like:

- Take a snapshot

- Update

- Run tests

- If tests fail, roll back snapshot
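The controlled update flow above can be sketched in Python like this. It is only the control flow: the four callables are hypothetical placeholders that you would have to implement for your own hypervisor, update tooling and test pipeline.

```python
from typing import Any, Callable


def controlled_update(take_snapshot: Callable[[], Any],
                      apply_update: Callable[[], None],
                      run_tests: Callable[[], bool],
                      roll_back: Callable[[Any], None]) -> bool:
    """Snapshot, update, test, and roll back if the tests fail.

    Returns True when the update is kept, False when it was rolled back.
    """
    snapshot = take_snapshot()   # 1. take a snapshot
    apply_update()               # 2. update
    if run_tests():              # 3. run tests
        return True
    roll_back(snapshot)          # 4. tests failed: roll back the snapshot
    return False
```

The point of passing the snapshot back into `roll_back` is that you always return to exactly the state you tested from, not to "whatever the last snapshot happens to be".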

You don’t want your development to stop being able to build just because Microsoft changed how they are handling 32-bit applications in a February update.. .

DOAAAS – DevOps Agent As A Service

With ALOps, we’re thinking about providing a service for anyone who needs a fast DevOps agent, fast. A cloud service, so to speak, that people can use, where their code can be built fast. Speed is key. And cost should be minimal. And the focus is on AL – meaning:

- Pre-installed Docker

- Pre-loaded Docker images

- Best practices to optimize speed and stability for AL builds

- Controlled Windows updates

- Your license and code are secure

- …

What do you think – interesting? Or not worth the investment? Always nice to have feedback on this ;-).

3 comments


When “implementation” ends then “support” begins. That’s where the all true “fun” starts.

Are the CPU requirements still current today? Reason I ask because we are highly considering the move to AlOps and want to order a build server/GAME

Any insights on the other system requirements for a dedicated build “server”?

Author

It’s still current, yes. I don’t like to give too much infrastructural advice, as it’s too much out of my league, sorry (I have a team managing that for me..).
